Send With Confidence

Partner with the email service trusted by developers and marketers for time-savings, scalability, and delivery expertise.

Time to read: 9 minutes

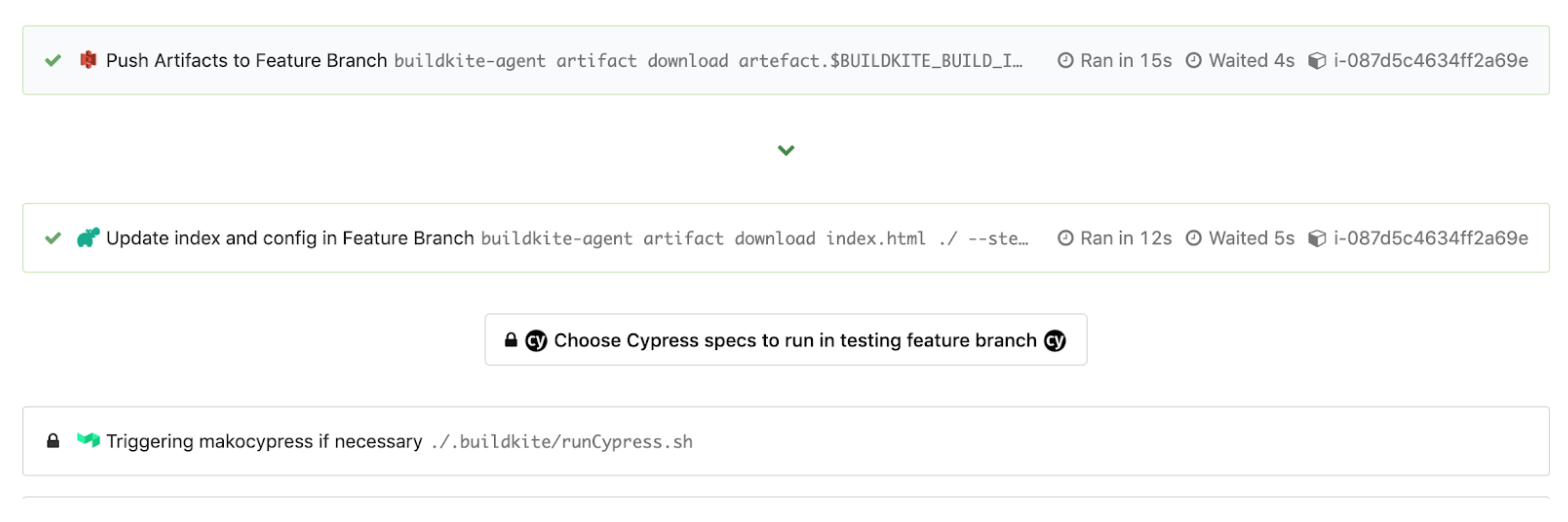

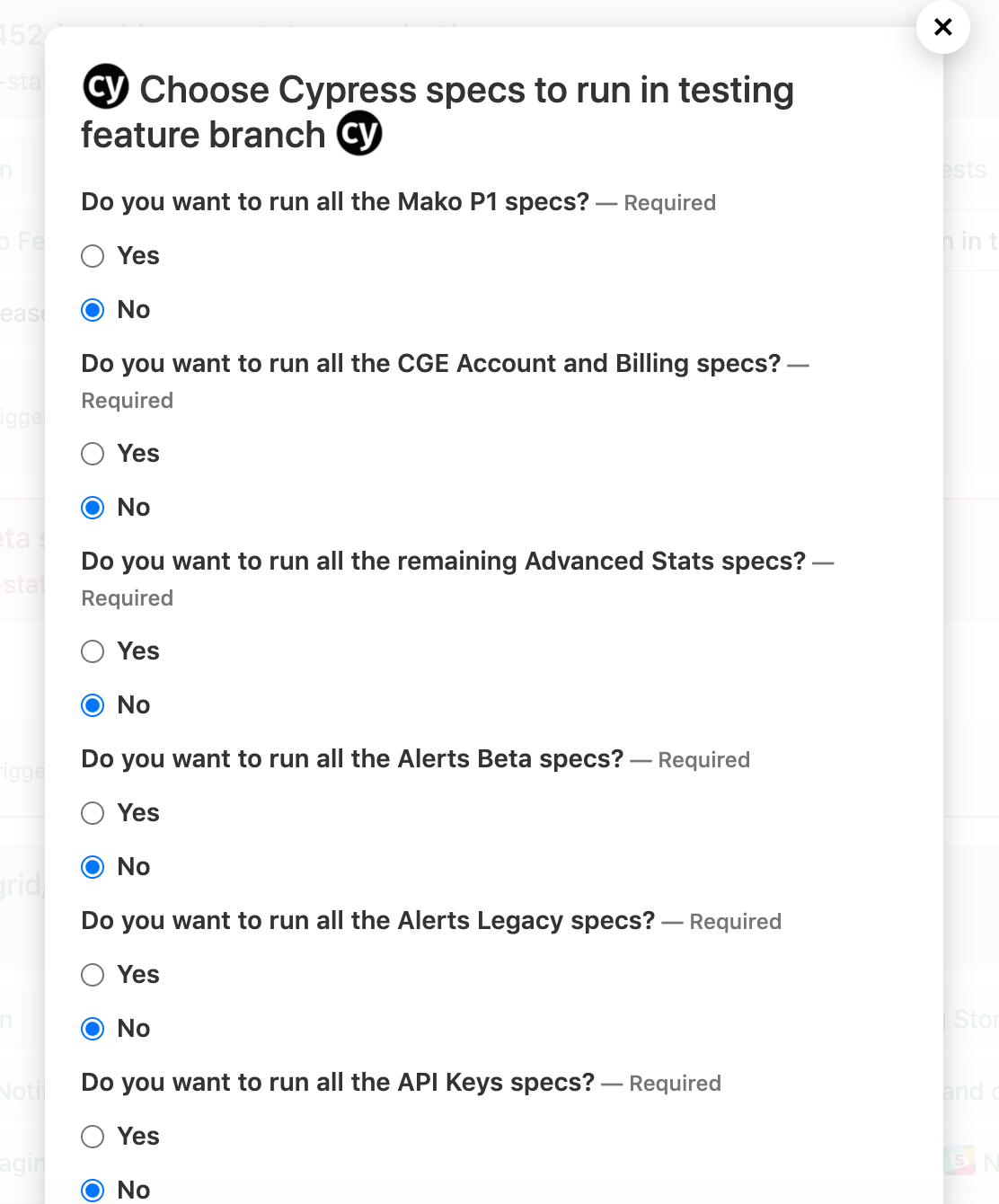

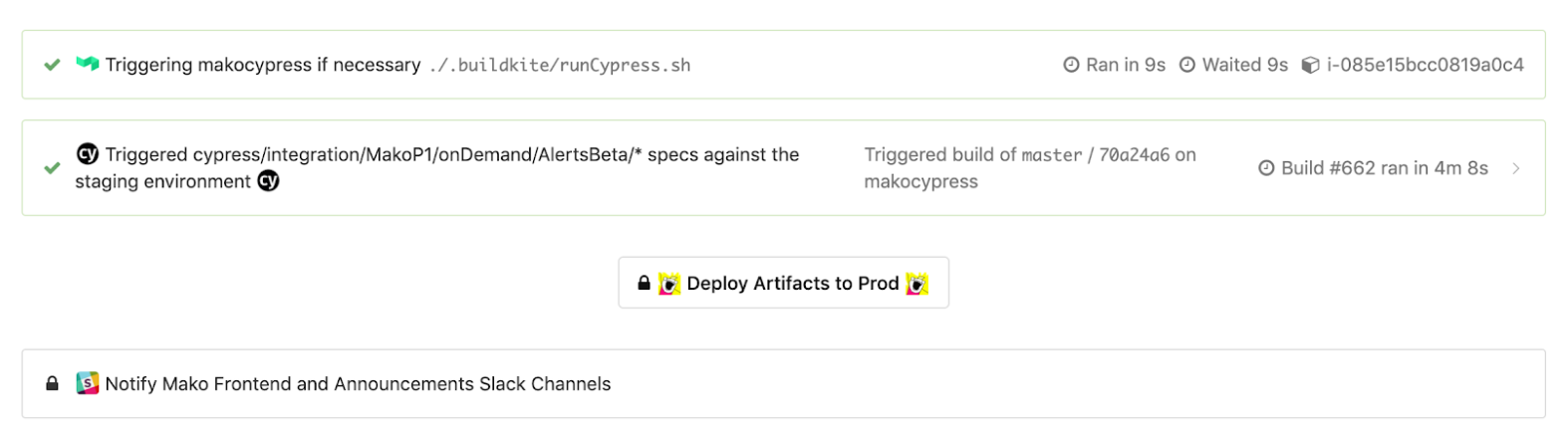

runCypress.sh to run after that select step to parse out the selected “Yes” or “No” values. We do this to form a comma-separated list of spec paths to run, which we append as the --spec option to the eventual Cypress command that runs in a Docker container in a triggered pipeline. We export environment variables, such as the formed list of specs in “CYPRESS_SPECS” and the current test environment in “CYPRESS_TEST_ENV”, for use in the pipeline we trigger at the end of the script with buildkite-agent pipeline upload "$DIRNAME"/triggerCypress.yml.
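As a rough illustration of the spec-list step described above, here is a minimal sketch and not our actual script. The `build_spec_list` helper, the spec paths, and the hard-coded answers are hypothetical; in a real pipeline the answers would come from the select step, for example via `buildkite-agent meta-data get`.

```shell
#!/usr/bin/env bash
# Sketch: turn "Yes"/"No" selections into a comma-separated list for --spec.

# Takes pairs of arguments: <answer> <spec-path> <answer> <spec-path> ...
# Prints the paths whose answer is "Yes", joined by commas.
build_spec_list() {
  local specs=""
  local answer
  local spec_path
  while [ "$#" -ge 2 ]; do
    answer="$1"
    spec_path="$2"
    shift 2
    if [ "$answer" = "Yes" ]; then
      # Add a comma separator only when the list already has an entry.
      specs="${specs:+$specs,}$spec_path"
    fi
  done
  printf '%s' "$specs"
}

# Hypothetical selections and spec paths, hard-coded for illustration.
CYPRESS_SPECS="$(build_spec_list \
  "Yes" "cypress/integration/login.spec.js" \
  "No"  "cypress/integration/signup.spec.js" \
  "Yes" "cypress/integration/billing.spec.js")"
export CYPRESS_SPECS
# "staging" is an assumed default, not a value from the original setup.
export CYPRESS_TEST_ENV="${CYPRESS_TEST_ENV:-staging}"

echo "Specs to run: $CYPRESS_SPECS"

# The real script would then trigger the second pipeline:
# buildkite-agent pipeline upload "$DIRNAME"/triggerCypress.yml
```

Keeping the joining logic in a small function makes it easy to check locally without a Buildkite agent.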

runCypress.sh, we recall that the script triggers the second pipeline by uploading the triggerCypress.yml file with assigned environment variable values. The triggerCypress.yml file looks something like this. You’ll notice the “trigger” step, and the interpolation of values into the build messages, which is helpful for debugging and for dynamic step names.

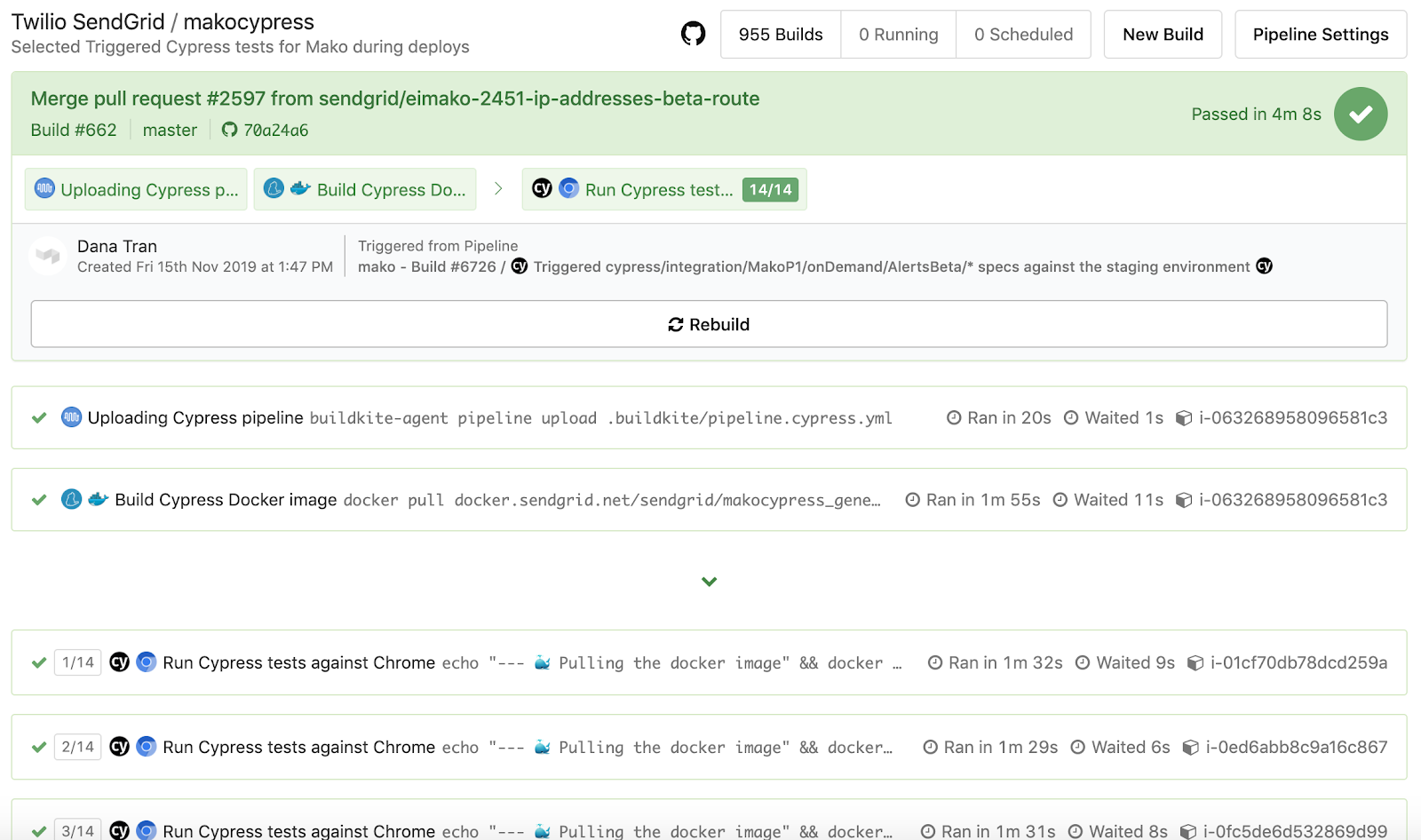

pipeline.cypress.yml file like so:

Dockerfile.cypress and docker-compose.cypress.yml use the environment variables exported from our pipelines to select the proper Cypress command from our application’s package.json, pointing to the right test environment and running the selected spec files. The snippets below show our general approach, which you can expand on and improve to be more flexible.

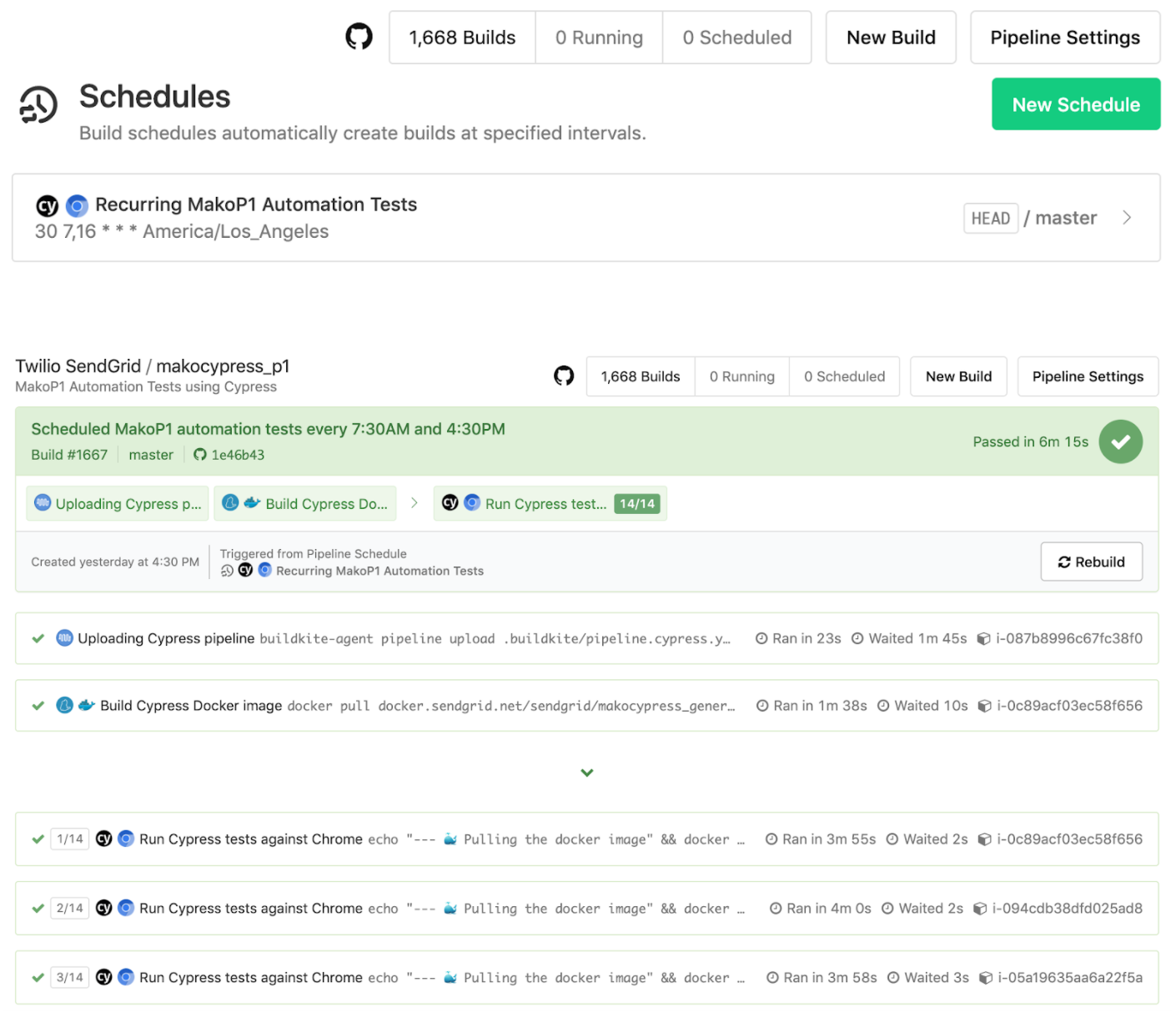
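To make the idea of selecting the command from environment variables concrete, here is a small sketch of what a container entry script might do. It is an assumption-laden illustration, not our actual Dockerfile or compose setup: the `build_cypress_command` helper and the `cypress:run:<env>` script naming in package.json are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: pick the package.json script and --spec option from environment variables.

# $1: test environment name, $2: comma-separated spec list (may be empty).
# Prints the command for inspection instead of running it.
build_cypress_command() {
  local test_env="$1"
  local specs="$2"
  # "cypress:run:<env>" is a hypothetical package.json script name.
  local cmd="npm run cypress:run:${test_env}"
  if [ -n "$specs" ]; then
    # Arguments after "--" are passed through npm to the underlying command.
    cmd="$cmd -- --spec $specs"
  fi
  printf '%s\n' "$cmd"
}

# Dry run using the variables exported by the triggering pipeline.
build_cypress_command "${CYPRESS_TEST_ENV:-staging}" "${CYPRESS_SPECS:-}"
```

Printing the command first is a cheap way to debug the wiring between the pipeline variables and the container before running real tests.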

We were able to run over 200 tests in around 5 minutes for one of our application repos. It then spreads out all the Cypress tests to run in parallel across those machines while maintaining the recording of each test for a specific build run. This sped up our test runs dramatically!

--ci-build-id option in addition to the parallel option so it knows which unique build run to associate with when parallelizing tests across machines.

Set up your commands to be flexible and configurable based on environment variable values.

Once you have your tests running in Docker with your CI provider (and if you pay for the Dashboard Service), you can take advantage of parallelizing your tests across multiple machines. You may have to modify existing tests and resources so they do not depend on one another, to avoid tests stomping on each other.
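The parallelization flags can be assembled the same configurable way. The sketch below is one possible approach under stated assumptions: the `parallel_flags` helper and the `RUN_IN_PARALLEL` variable are hypothetical, and using Buildkite's `BUILDKITE_BUILD_ID` as the shared build identifier is an assumption rather than a description of our setup.

```shell
#!/usr/bin/env bash
# Sketch: add recording and parallelization flags only when they are enabled.

# $1: "true" to enable parallel runs, $2: build id shared by every machine in the run.
# Prints the extra flags, or nothing when parallelization is off or the id is missing.
parallel_flags() {
  local enabled="$1"
  local build_id="$2"
  if [ "$enabled" = "true" ] && [ -n "$build_id" ]; then
    # --parallel requires --record, which in turn needs a record key
    # (commonly supplied through the CYPRESS_RECORD_KEY environment variable).
    printf '%s' "--record --parallel --ci-build-id $build_id"
  fi
}

# RUN_IN_PARALLEL is a hypothetical toggle; BUILDKITE_BUILD_ID is assumed as the build id.
flags="$(parallel_flags "${RUN_IN_PARALLEL:-false}" "${BUILDKITE_BUILD_ID:-}")"
echo "cypress run $flags"
```

Every machine in the same build must pass the same `--ci-build-id` value so that the runs are grouped together.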
